AWS Generative AI Tools Overview: A Comprehensive Guide for 2025

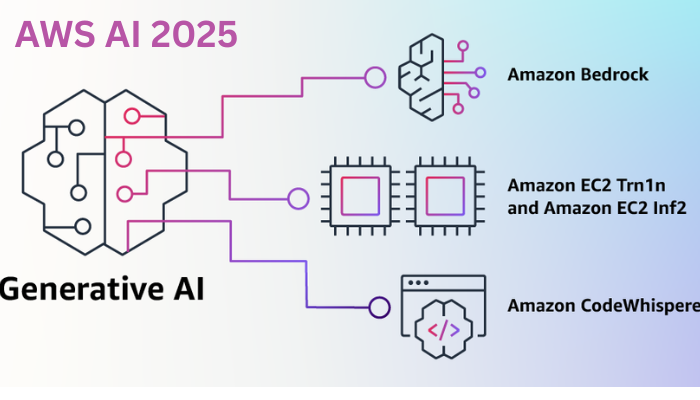

Considering the technological trajectory over the past two years, the 2025 landscape of generative AI is astonishingly rich. AWS, consistently at the forefront of cloud-based innovation, continues to refine its generative AI toolkit, enabling creators, startups, and global enterprises to incorporate synthetic intelligence into their workflows. The most noteworthy aspect of this suite is not its sheer capability—although it is remarkable—but its user-first design. Whether one is a programmer perfecting a side project in a home office or an enterprise architect sculpting a cross-continent deployment, AWS strips the friction from the process. The integration of low-code interfaces, generous usage tiers, and granular pricing has democratized a domain traditionally reserved for advanced researchers and deep-pocket corporations. Later in this article I will walk you through a few of the newer additions for 2025 and share practical optimizations informed by conversations with engineers actively deploying the platform. First, let’s recap the key capabilities offered by AWS’s generative AI ecosystem.

What is Generative AI, and Why Choose AWS for It?

Before we dive into the tool set, a brief clarification is in order. Generative AI represents the AI discipline that forges novel artifacts, whether text, images, software, or musical compositions, by extrapolating from extensive training datasets. It relies on foundation models and large language models, which synthesize authentic-seeming content. Applications range from composing professional emails to engineering therapeutic proteins, illustrating the technology’s transformative breadth.

Why, then, AWS as the operating environment? With sustained leadership in cloud AI services, AWS supplies a secure and scalable architecture, fortified by enterprise-grade governance, and grants access to cutting-edge models from partners such as Anthropic and Meta. The platform delivers tool chains designed for precise adaptation to organizational use cases, which can drastically shorten innovation cycles. Additionally, the comprehensive focus on responsible AI, encapsulated in configurable guardrails that mitigate bias, ensures ethical compliance is not an afterthought. On multiple occasions, I have witnessed project teams halve elapsed time by incorporating AWS generative services, effectively working alongside a highly intelligent assistant that bolsters rather than supplants human creativity.

Key AWS Generative AI Tools: A Comparative Overview

AWS provides an integrated ecosystem of services designed to support the generative AI life cycle, from initial prototyping to enterprise-scale deployment. This summary covers the principal offerings, drawing on current AWS documentation.

1. Amazon Bedrock: The Integrative Foundation for Tailored AI Solutions

Envision a configurable laboratory where pre-built, high-calibre generative models await selective retrofitting to institutional data sets—this analogy captures Amazon Bedrock concisely. The platform serves as AWS’s nucleus for developing bespoke generative applications, embedding models such as Amazon Titan (both textual and visual variants), Amazon Nova, Anthropic Claude, and Meta Llama.

The service is distinguished by several accessibility-oriented features:

Model Customization: Employ fine-tuning, retrieval-augmented generation, and similar techniques to adapt pre-trained models or ground them in enterprise data, thereby tightening alignment with enterprise semantics and improving precision.

Agents and Guardrails: Deploy autonomous AI agents subject to embedded compliance controls, minimising risk by constraining generative behaviours to predetermined ethical and functional parameters.

Converse API and IDE: A chat-oriented interactive development environment, combined with a visual no-code interface, allows subject-matter experts to iteratively prototype and benchmark model prompts—an inviting entry point for users lacking deep machine learning expertise.
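
To make the Converse API and guardrail pairing above a little more concrete, here is a minimal Python sketch of how a single chat turn might be assembled and sent. Treat it as an illustration under stated assumptions rather than a reference implementation: the model ID is a placeholder, the guardrail identifier and version are hypothetical, and the helper names `build_converse_request` and `ask` are my own. The live call relies on the `converse` operation of the boto3 `bedrock-runtime` client and needs AWS credentials plus model access in your account.

```python
from typing import Any, Optional

# Placeholder only: substitute a model ID that is enabled in your account.
MODEL_ID = "example-model-id"


def build_converse_request(
    prompt: str,
    model_id: str = MODEL_ID,
    guardrail_id: Optional[str] = None,
    guardrail_version: Optional[str] = None,
    max_tokens: int = 256,
) -> dict:
    """Assemble keyword arguments for the bedrock-runtime `converse` call."""
    request: dict = {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": 0.2},
    }
    # Attach a guardrail only when both identifier and version are supplied.
    if guardrail_id and guardrail_version:
        request["guardrailConfig"] = {
            "guardrailIdentifier": guardrail_id,
            "guardrailVersion": guardrail_version,
        }
    return request


def ask(client: Any, prompt: str, **options: Any) -> str:
    """Send one user turn and return the first text block of the reply."""
    response = client.converse(**build_converse_request(prompt, **options))
    return response["output"]["message"]["content"][0]["text"]


def run_live_example() -> None:
    """Not called automatically: requires boto3, credentials, and model access."""
    import boto3

    runtime = boto3.client("bedrock-runtime")
    print(ask(runtime, "Suggest three names for a retail chatbot."))
```

Keeping the request builder separate from the network call means the payload can be inspected or unit-tested with a stub client before anything is billed.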

If you are considering deployment and are wondering, “When is this the right tool?” Bedrock excels precisely when you seek fine-grained governance over model architecture and generated content. For instance, when constructing a bespoke conversational agent for a retail platform, the capacity for bespoke tuning and transparent output analysis is invaluable. I have conducted comparable validations in internal experiments; the synergetic coupling with the AWS ecosystem—Lambda for event-driven functions, S3 for storage, and Step Functions for orchestration—renders horizontal scaling straightforward and economically efficient.

2. Amazon Q: The Intelligent Assistant for the Enterprise and Developer Community

Has the notion of a digital collaborator that intuitively synchronizes with established procedures ever appealed to you? Amazon Q, AWS’s assistant leveraging generative AI, formalizes this vision in two specialized editions: Q Business and Q Developer.

Amazon Q Business: This variant interfaces with organizational knowledge repositories—electronic correspondence, documents, and relational and non-relational databases—to deliver precise responses, synthesize temporal insights, and independently draft output. The benefit is comparable to a high-throughput intern fluent in technical language, producing ad hoc micro-applications when required.

Amazon Q Developer: Tailored to the engineering workflow, this edition expedites coding, testing, error resolution, and resource tuning on AWS. Embedded within platforms such as Amazon CodeCatalyst and EC2, it can supply code fragments, trace sources of failure, and recommend architectural optimizations, which shows up as shorter turnaround and heightened productivity.

Why it’s compelling: With Amazon Q, you avoid the often paralyzing decision of model selection; the service simply assimilates your prompt and executes, which is perfect for engineers chasing the next incremental improvement. Picture the midnight moment of despair over a misconfigured AWS function: Q Developer pivots, reformulates the validation rules, and you’re back to sleeping comfortably. That level of operational clarity is transformative.

3. Amazon SageMaker: Advanced Lifecycle Management for Fine-Grained Models

When your intellectual curiosity demands that you own the machine-learning workflow end to end, Amazon SageMaker is the expedition base camp of choice. Built for iterative training, fine-tuning, and production-grade deployment of foundation models, it is complemented by SageMaker JumpStart, a library of pre-trained models.

Key differentiators:

Distributed Training: Scale very large architectures, with billions of parameters, efficiently across AWS’s distributed compute nodes, avoiding the thermal, logistical, and budgetary nightmares of on-prem.

Model Evaluation: Safeguard model integrity through SageMaker Clarify, which examines data distributions and model predictions for unintended biases.

No-Code Pathways: The Unified Studio canvas empowers domain champions to architect production-grade microservices through drag-and-drop workflows that compile to secure, versioned pipelines.
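
To give a feel for the kind of check a bias evaluation performs, here is a small plain-Python sketch that compares the rate of positive labels between a reference group and everyone else. It is not the Clarify API and makes no claim to match Clarify’s exact metric definitions; the function names and the toy data are my own assumptions, chosen only to illustrate the idea.

```python
from collections.abc import Sequence


def positive_rate(labels: Sequence[int]) -> float:
    """Fraction of labels equal to 1; raises on an empty group."""
    if not labels:
        raise ValueError("group has no samples")
    return sum(1 for y in labels if y == 1) / len(labels)


def label_proportion_gap(
    labels: Sequence[int],
    groups: Sequence[str],
    reference_group: str,
) -> float:
    """Positive-label rate of the reference group minus that of all others.

    A value near 0 suggests similar label rates; a large magnitude flags an
    imbalance worth investigating before training.
    """
    reference = [y for y, g in zip(labels, groups) if g == reference_group]
    others = [y for y, g in zip(labels, groups) if g != reference_group]
    return positive_rate(reference) - positive_rate(others)


# Toy data: 1 = favourable outcome, groups "a" and "b" are illustrative.
toy_labels = [1, 1, 0, 1, 0, 0, 1, 0]
toy_groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
gap = label_proportion_gap(toy_labels, toy_groups, reference_group="a")
print(gap)  # 0.75 - 0.25 = 0.5
```

On the toy data, group "a" receives the favourable label three times out of four and group "b" once out of four, so the gap is 0.5, which is the sort of imbalance a pre-training bias report is meant to surface.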

Engage SageMaker when bespoke problem definition demands hypercustomization, for instance, designing a diffusion model that synthesizes radiographs conditioned on genomic data. For a practitioner fluent in scoring metrics and validation splits, the service API, guided notebooks, and managed deployment stages turn the agony of late iterative rewrites into well-deserved checkpoints along the road.

4. Underlying Acceleration Substrate: AWS Trainium and Inferentia

At the infrastructure stratum, AWS engineers custom silicon: Trainium for accelerated model training and Inferentia for batched production inference. The premise is an economy of scale that is only possible when the hardware is designed around the workloads of generative networks, raising efficiency and lowering operating cost both during training and afterward in production.

AWS Trainium offers a dedicated chip architecture calibrated for the training of deep neural networks through EC2 Trn2 instances, enabling the economical enlargement of very large foundation models by reducing the per-epoch cost. Its capabilities are particularly suited for cost-sensitive research and production settings in which incremental scaling of training capacity is required.

AWS Inferentia, also purpose-built, is tuned for the inference phase, providing low-latency, cost-efficient inference through EC2 Inf2 instances. Platform-optimized pipelines help very large batches of predictions approach the latencies that interactive, media-facing applications expect, thereby supporting performance-intensive applications such as on-the-fly generation of ultra-high-definition video. While the architecture does not possess the user-facing gloss of generative-design platforms, its capable hardware and elastic consumption models deliver unglamorous, yet highly significant, gains across cost-sensitive production cycles.

Among the generative AI announcements for 2025, the Well-Architected Generative AI Lens is a prescriptive guide that architecture teams can apply as a playbook for workload optimization. The document advocates for security, environmental sustainability, and cost-awareness across its six design pillars. Separately, the generative AI division has allocated a 1.5 billion USD investment, earmarked for independent and co-innovation budgets supporting the development of modular and autonomous AI agents, a segment that is maturing quickly in research and production settings. An initial preview of the updated agent hardware policy has been made available to selected customers. The 2025 policy cycle also includes newly enhanced operational guardrails for Bedrock, which are designed for high-accuracy compliance with AI safety and operational continuity requirements.

Together, these advancements give architects and developers a steadily improving surface for experimentation that is both low-cost and secure. The cadence of change now appears to follow the rhythm of the useful prototype rather than the laboratory prototype, and the strategy appears to anticipate large-scale risk rather than merely respond to it, a trajectory my colleagues and I enthusiastically welcome.

Applied Use Cases and Measurable Advantages

Generative AI deployed via AWS has moved beyond conceptual discussions and is delivering concrete results across multiple sectors. In biomedicine, researchers deploy it to design novel protein structures, accelerating the creation of more effective vaccines. Concurrently, marketing teams leverage the same underlying technology to automatically produce tailored messaging, delivering individualized campaigns to millions simultaneously. The overall payoff is substantial: accelerated product cycles, reduced operating expenditures, and distinctive consumer interactions that go beyond traditional engagement metrics.

A field-tested insight from running this technology across client projects: begin experimentation with Amazon Q to validate core hypotheses, then move the proven use cases to Bedrock for enterprise-grade scalability. In our experience, the shift from manual configuration to integrated workflows nearly doubles throughput. As a benchmark, our group deployed a data-driven content generator within four business days, an exercise that typically consumes four weeks, which moved the conversation beyond feasibility to how quickly hypotheses can be tested.

Onboarding AWS Generative AI Services

To translate curiosity into application, log into the AWS Management Console and investigate the Bedrock and SageMaker free tiers. The accompanying resource library provides guided, hands-on workflows, and participating in platforms like AWS re:Post yields tactical tribal knowledge. Frame the exercise with a well-articulated business proposition—identify the single, measurably bounded need to be addressed—then let the range of services and interfaces refine the hypothesis into deployable operation.

Concluding Thoughts: Harness the AWS Generative AI Landscape

The preceding overview should speed your familiarity with AWS generative AI offerings without taking the enjoyment out of it. From Bedrock’s adjustable foundations to Q’s everyday productivity gains, these capabilities collectively dissolve the dichotomy between laboratory prototypes and practical delivery. In 2025, the technological horizon reads as a toolbelt rather than a telescope. Those driven by genuine curiosity should prototype, experiment, and chronicle outcomes. Which AWS resource is proving most indispensable in your workflow?

Maintain investigative momentum, iterate relentlessly, and engage on aiblogs.blog. For analytically oriented discussions and practical narratives, a subscription will furnish regular exploratory installments. Is your AI prospect a wide-agent conversation, a domain-specific generative assembly, or something yet to appear on the boards? Leave a marker in the comments, and we will collectively calibrate the roadmap.